Attempts At Optimizing VGA Mode 13h BitBlts and Tilemap Rendering

As my last post indicated (which has been a while now, whoops!), I've been working on optimizing the [bitblt] (https://en.wikipedia.org/wiki/Bit_blit) routines in libDGL. I'm definitely no master of optimization and am not expecting to come up with anything revolutionary. In fact I'm sure I will goof things up a fair bit in the process and miss obvious avenues of optimization, heh. Every little bit of speed counts for the hardware I'm targeting with this project (486's and maybe 386's later on). Figured I'd share the results of some of my latest attempts, including the parts that didn't result in improvements.

Recently, I thought it would be a fun idea to do a pretty much 1:1 conversion of some old projects of mine written around the turn of the century. The idea would be to convert them to C and get them up and running with libDGL. The code in these projects was pretty terrible and I've no long-term intentions of extending them. However, I figured it would be interesting mainly just to see how fast I could get them running.

Even back then, I liked using obsolete development tools like QuickBASIC 4.5 which by 2000/2001 was definitely obsolete. At that time I was writing code on a AMD Duron 800MHz PC, so I was never too concerned with performance, even with QuickBASIC. Running this old QuickBASIC code today as-is on my 486 DX2-66, I see that it barely maintains 30-35 FPS. This code was built using DirectQB 1.61 (a great library for the time, used by a lot of games) with some improved tile/sprite bitblt routines by Rel since the original routines in DirectQB were known to be kind of slow.

Of course, just by doing a straight-up conversion to C we will get significant performance gains. QuickBASIC isn't exactly an optimizing compiler, heh. But I wanted to see just how fast we could get the basic tilemap rendering going.

Here's the original QuickBASIC code for drawing the tilemap:

SUB DrawMap

'Get camera position

CameraX = Engine.x - 160

CameraY = Engine.y - 96

'Make sure we aren't going to go off the map buffer

IF CameraX < 0 THEN CameraX = 0

IF CameraY < 0 THEN CameraY = 0

IF CameraX > Engine.MaxX - 320 THEN CameraX = Engine.MaxX - 320

IF CameraY > Engine.MaxY - 200 THEN CameraY = Engine.MaxY - 200

'Get the starting tile to draw at

xTile = CameraX \ 16

yTile = CameraY \ 16

'Get the pixel offset to draw at

xpos = CameraX MOD 16

ypos = CameraY MOD 16

'Now actually draw the map

FOR x = 0 TO 21

FOR y = 0 TO 14

'Get the tile numbers to draw

tile = map(x + xTile y + yTile).tile

tile2 = map(x + xTile y + yTile).tile2

xp = x * 16 - xpos

yp = y * 16 - ypos

'Draw the first layer

RelSpriteSolid 1 xp yp VARSEG(tilearray(0 tile)) VARPTR(tilearray(0 tile))

'Draw the second layer only if the tile isn't equal to 0

'(Tile 0 should be a blank tile, in which case we don't want to draw

'it because it'll slow the engine down)

IF tile2 <> 0 THEN

RelSprite 1 xp yp VARSEG(tilearray(0 tile2)) VARPTR(tilearray(0 tile2))

END IF

NEXT y

NEXT x

END SUB

So after doing a bunch of straightforward converting and getting the basic engine up and running, I ended up with the following C function. This is, for now, intended to be a 1:1 equivalent of the above code:

void

Definitely room for improvement here.

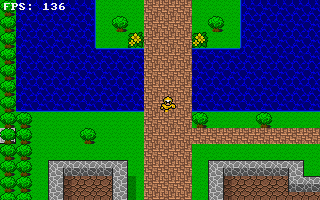

So how fast is it? Well, I was impressed actually. Mostly. There are two scenarios that are important to benchmark:

Fast scenario

Fast scenario

Slow scenario

Slow scenario

Just ignore the obviously ripped graphics. Younger-me couldn't draw pixel art, but I sure knew how to rip graphics from SNES ROMs! There was some great DOS-based tool I remember I really liked for doing it, but unfortunately I cannot recall the name of it. Anyway, Today-me still doesn't know how to draw pixel art, so we'll just continue using these ripped graphics for now.

So the difference between these two scenarios is the amount of "layer 2" tiles being drawn. You can see in the above

render loop code that a check is done for a non-zero tile2 value and then a call to surface_blit_sprite is done.

This of course draws the second layer tile with transparency to overlay tiles on top of the lower layer. As you can see

in the right screenshot, there are a ton of tree tiles being drawn and with the map that the engine is using for this

test, these are all on layer two (with layer one just being a simple grass tile).

Let's get the blatantly obvious problem out of the way first: There is NO need for the map to be laid out this way. We don't even need to touch code to see significant improvements. We just need to adjust the tileset and map so that we can skip the vast majority of the layer 2 tiles. If we needed a bunch of variations of tree tiles with different ground underneath them, then we might very well be better served with a bunch of different tree tiles in the tileset we use, each with the different ground needed. However, this map was what I put together in 2001 or so. For now we'll just stick with it and see what else we can do.

Also, I should mention that these two screenshots show the frame-rates at double-word aligned memory offsets. We'll come back to this later though as memory alignment is obviously an important topic.

So, the first thing that came to mind when looking at my freshly converted draw_map() function was to eliminate the

unnecessary clipping checks for 90% of the tiles being drawn in this render loop. Only tiles on the edge of the screen

actually need to be checked for clipping. We can do this very simply for now:

if else

surface_blit_f and surface_blit_sprite_f are "fast" versions that skip clipping checks, but otherwise work exactly

the same (in fact, surface_blit and surface_blit_sprite call the fast versions internally).

This gets us a little bit of an improvement. 147/148 FPS in the fast scenario, and 97/98 FPS in the slow scenario.

It's important to note here that in VGA mode 13h, the screen resolution is 320x200. We're using 16x16 tiles in our tilemap, so we end up needing to draw a minimum of 20 tiles horizontally, and 13 tiles vertically (where the last row at the bottom will only be half visible, since 200/16 = 12.5). In order to do pixel-by-pixel scrolling as in this tilemap rendering engine, we need to add one extra column and row to ensure we don't have any gaps anywhere along the edges at any point as the screen is scrolling. Because of the uneven vertical tile count (12.5) we actually need to do clipping for the bottom two rows.

We could of course add a check for y_offs >= 8 to determine if we actually need to draw that last row at all. Though

this obviously won't always improve performance, it would depend on how the screen is currently scrolled.

Anyway, the next thought I had was to improve the way that the map data was being accessed inside the loop. I didn't figure that this would make a big difference, but let's see:

// now actually draw the map

index = + x_tile;

tiledata = &map->tiledata;

tileset = map_tiles->tiles;

xp = -y_offs;

yp = -x_offs;

for

We get a very minor boost from this. 151/152 FPS in the fast scenario, and 98/99 FPS in the slow scenario. But it's something!

Well, now we have that x/y coordinate check that runs every iteration of the loop to see if we need to use clipped

blits or not. We know that 90% of the screen does not need clipped blits, so I decided to try spliting up the rendering

loop on this basis so that we don't need to run that check all the time. I ended up with 3 separate loops:

- The top and bottom row (for

y = 0,y = 12andy = 13only, remember two bottom rows can get clipped) - The left and right columns (for

x = 0andx = 20only) - Everything in-between.

In the end this gained me about 1 FPS, but it made the code significantly larger because there were three loops instead of one, and each of these included similar sets of calculations (but different enough that I did need to have three sets of them). Probably could have cleaned the code up a fair bit, but ultimately since this all made a tiny difference, I decided that the extra complexity just wasn't worth keeping. Perhaps I would want to revisit this later on once I see how this runs on a 386 CPU.

Next, I decided to take a closer look at what the actual surface_blit and surface_blit_sprite calls do. I've already

spent a bunch of time trying to optimize them and I'm sure there's still some stuff that can be done, but I'll start

off with them as they are today and not the much slower versions I had written a few months ago.

static void

static void

void

void

Alright, so as we can see, these aren't super interesting and we might as well just look at surface_blit_region_f and

surface_blit_sprite_region_f. We could likely optimize clip_blit. In fact, I don't even think I've tried to do this

at all ever. The existing implementation is a copy of some code I had written over 10 years ago if I recall correctly,

heh. However, let's just ignore it for now since the current tilemap function we have skips clipping for probably 90%

of the tiles being drawn.

static int

static byte*

void

I talked about this in my last post, but to recap, the idea here is that I figured there were three main scenarios for bitblts (post-clipping of course):

- The width of the blit is an even multiple of 4. In this case, we can simply do a

rep movsd' for each row. Very nice and efficient. - The width is some value larger than 4, but it is not an even multiple of 4 so we can split each row into a

rep movsdfollowed by arep movsb. - The width is some value < 4. We can just do a

rep movsb.

The most common scenario when dealing with "typical" game graphics would be the first scenario. In our case, we are using 16x16 tiles and sprites, so definitely this will be the case for us. The remaining two scenarios would primarily occur for partially clipped blits, so these two would not be what would get run for the vast majority of blits.

It's worth pointing out that I didn't start with a blit function implementation that had these three scenarios. I

started with a simple one that just did rep movsb for each row of pixels. Once I saw how that performed I then

thought about it and came up with the three scenario idea. I then saw that, indeed, this way performed much better.

Having said that, I'm sure it's been implemented better by smarter people then me decades earlier.

The direct_blit_xxxx calls are implemented in assembly and are relatively simple:

void

void

void

Some compiler/toolchain-related things to note here first before going on:

- I'm using Watcom C 11.0 for this. Thus, I can make use of the nice

_asmblock inline assembly support. However, as I demonstrated in my previous post, I had run into what looked like a compiler bug with Watcom's_asmsupport. After playing with it some more, I noticed I got good results by just moving all my_asmblocks to their own functions. This is kinda-sorta-maybe in some ways like using externally linked assembly functions via something like TASM or MASM. At least, as far as it just being a function call instead of slapped somewhere else inline in some block of C code. Kind of a nice separation, especially for these blit functions and it both fixes the problem I had run into and means I don't have to worry much about setting up a proper calling convention in whatever assembler I would otherwise be using (which would involve some icky#pragmausage). Which leads me into the next point ... - Watcom's default calling convention uses registers for the first 4 arguments (well, at least for 32-bit values).

eax,edx,ebx, andecxin that order. For any remaining arguments, the stack is used as per normal C calling convention. Because I'm using a pattern of putting my larger_asmblocks in non-static functions all by themselves, I can "hijack" this calling convention easily enough and skip some additional stack copying that Watcom would generate if I used the argument variable names for those first 4 arguments. This is probably kind of a dirty hack, but it seems to work well, and that's why you'll notice in these assembly functions that I don't seem to ever reference the first 4 arguments. I do, but they are already in registers.

I'm going to go ahead and claim that these are good enough. I mean, they've basically been reduced to a loop of rep movsd's in the best and by far most common case. I don't think it gets much better than that. I'm sure there's some

optimization guru reading this that is face-palming after having read that statement and noticed some dumb thing I did

in the code, but hey, I did say above that I'm no expert!

I did play with using ebp as well in direct_blit_4 since that function is called the most out of the three. Using

ebp as a general purpose register in that function allows me to not use any values from the stack at all once inside

the loop, so I figured it would be worthwhile. But it barely made any noticeable difference on my 486. It would

probably be worth testing on a 386 though to see the difference, but I'll wait until I have an actual 386 to test with.

For now, I decided to leave this optimization out. Using ebp in this way is tricky, as once you change it like this

you cannot access anything using your variable names (the compiler, or assembler, replaces them with addresses relative

to ebp). You could still access the stack using addresses relative to esp, but I decided not to go down this road

at this time.

Alright, well, since the slowdowns really seem to be regarding the surface_blit_sprite calls in our draw_map () function, let's look at it. The core of it (as indicated by code shown previously) is implemented in

surface_blit_sprite_region_f:

void

Immediately, we can see it's a bit different from surface_blit_region_f, but at its core it's the same basic

three-scenario implementation. It's just been extended to also look for an even multiple of 8 and to call a slightly

different assembly function for those cases.

Again, this was something that I initially didn't do for the first implementation of this function. I originally had a simple loop (written entirely in C code) that checked one pixel each iteration and if non-zero would draw it. Nice and simple. I then decided to try unrolling the loop and do 4 pixels per iteration, still only in C code. This gave a significant improvement, so I decided to try adding an extra 8-pixel-per-iteration version and saw an improvement again but not as significant this time. Still, it was enough that I thought it warranted keeping it.

Watcom's optimizer actually did a pretty damn good job with my C code version. I was able to tweak it slightly to help the optimizer out, but eventually decided that it was probably better to write it in assembly anyway. This is because it seemed rather easy to make what seemed like an extremely minor change to the code that would result in the optimizer generating some real inefficient block(s) of code.

Anyway, here are the direct_blit_sprite_xxxx functions:

void

void

void

void

void

As you may have been able to imagine before even seeing this code, these are very much implemented in the same general

way as the non-transparent blits are. Instead of rep movsd and we have unrolled loops that must check each and every

pixel for transparent pixels before drawing. Same for rep movsb, except the loops that replace these aren't

unrolled.

After initially writing these assembly sprite blit routines, I started to look for ways that I might make the unrolled

loop section faster. I began by trying to reduce the number of memory reads by reading 16-bits instead of 8-bits at a

time. Then I would only need to read pixels every other time and could access two pixels by using bl and bh.

draw_px_0:

mov bx, [esi+0] ; load two pixels at once

test bl, bl

jz draw_px_1 ; if this pixel is color 0, skip it

mov [edi+0], bl ; otherwise, draw it onto dest

draw_px_1:

test bh, bh ; don't need to read, second pixel is already in bh

jz draw_px_2

mov [edi+1], bh

On my 486 this resulted in barely any noticeable difference. Maybe 1 FPS of an improvement for the slow scenario. I

don't have a 386 to test with, but from looking at some instruction timing information, I'm guessing this should be

an improvement on a 386 processor. mov reg, mem takes 4 clock cycles on a 386, versus 1 clock cycle on a 486, so by

removing some of these operations we should save some time anyway.

The other thing I was curious about was using bswap. This instruction was added starting with 486 processors, so if I

wanted to support 386's I couldn't use it anyway, but even still, I just wanted to try it. What bswap does is reverse

the byte order of a 32-bit register. This can be used as a means to access the upper 16-bits of a 32-bit register,

which you otherwise wouldn't be able to do independently since x86 architecture doesn't provide you with any 8/16 bit

registers for the upper half. With this in mind I figured I would be able to do something like:

draw_px_0:

mov ebx, [esi+0] ; load 4 src pixels

test bl, bl

jz draw_px_1 ; if it is color 0, skip it

mov [edi+0], bl ; otherwise, draw it onto dest

draw_px_1:

test bh, bh ; don't need to read, second pixel is already in bh

jz draw_px_2

mov [edi+1], bh

draw_px_2:

bswap ebx ; swap bytes. bh now has this pixel, bl is the next

test bh, bh

jz draw_px_3

mov [edi+2], bh

draw_px_3:

test bl, bl

jz draw_px_4

mov [edi+3], bl

However to my surprise, this made no noticeable difference again. Anyway, doesn't really matter much since I could not

use this on a 386. Using shr as an alternative method to access the upper 16 bits is no good. It's too expensive to

use for something like this. shr reg, imm is 2 clock cycles on a 486 and 3 clock cycles on a 386, whereas bswap

runs in only 1 cycle.

There might be some other improvements that can be made here, but nothing came to mind so I figured I'd move on.

Looking back at my draw_map() function, I figured why not call the direct_blit_xxxx and direct_blit_sprite_xxxx

assembly functions directly? We can't do that for the tiles around the edges of the screen that need to be clipped, but

we should absolutely be able to do this for the inner 90% of the tiles that are drawn. As an added benefit, since we're

using 16x16 tiles, we know that we can always just call direct_blit_4 and direct_blit_sprite_8. All we need to do

is manage all the source and destination memory parameters ourselves directly instead of x and y coordinates.

Probably won't be a large boost, but we'll see.

// now actually draw the map

index = + x_tile;

tiledata = &map->tiledata;

tileset = map_tiles->tiles;

yp = -y_offs;

xp = -x_offs;

pdest = ;

for

(Obviously no need to worry about the multiplications and divisions you now see in the above code. They're all based

entirely on constants, except for one * 2 which will become a shl anyway by the optimizer.)

At this point, the draw_map() function implementation is starting to look kind of messy to me. However, the

improvement this brings is small but noticeable. Up to 159/160 FPS in the fast scenario and 105 FPS in the slow

scenario.

The last thing I wanted to look at was if we could help mitigate the performance drop that occurs when the screen is

scrolled to some position that results in us drawing all of the visible map tiles at unaligned memory addresses. In

32-bit protected mode (as I am using), we want to be aligned to a 4-byte boundary. So that means that, right now,

beginning a blit at three out of every four x coordinate values across the entire width of the screen will make the

blit unaligned.

How big a deal is this? Well, if I adjust the x coordinate of each of our two scenarios by one pixel (in either

direction), we end up getting 135 FPS in the fast scenario and 94 FPS in the slow scenario. So, it's a big enough deal

that we should at least see if we can do something to lessen the blow. This is a pixel-by-pixel scrolling engine after

all, so these unaligned offsets will occur frequently.

I was not really too optimistic that I would be able to improve things with my current knowledge/experience. Admittedly, I've never really written code that tries to deal with memory alignment. As I understand it, the main slowdown is on the writes and, indeed, this is where the source of unaligned memory accesses will be for us.

We need not worry about our source tile graphics in this case, as the tiles in a typical tileset (including the one

we're using here) will all be some even number like 16x16 or 32x32 and will either have each tile in their own

allocated block of memory, or all tiles will be arranged in a grid on some larger allocated block of memory (but in

this case, accessing an arbitrary tile in such a grid will result in some 4-byte aligned address anyway). For memory

allocations, malloc() is likely ensuring that the allocation is aligned, so no worries there. It's important to note

that libDGL's blit routines allow using an arbitrary region as the blit source, and this region could be located at

an unaligned address. I suspect that this wouldn't be a common use case, so I chose to ignore it.

So after thinking about it a bit I figured that I would start with trying to optimize surface_blit_region_f first and

ignore sprite/transparent blits for now (unsure what I can really do there to be honest).

I decided that I would try calculating the number of bytes at the start of each line in the blit that are before the

next 4-byte boundary. Then I could do a rep movsb up to this next boundary. Following this, I could proceed with the

remainder of the line like normal (that is, with a single rep movsd or a combo of rep movsd and rep movsb as

appropriate based on our existing method).

However, I suspected this would be slower then just not dealing with memory alignment at all. rep movsb takes 12+3n

clock cycles on a 486, and we're talking about adding an extra one in some cases. But anyway, I went ahead with it

just to see. Here are the modifications I made to surface_blit_region_f:

// ...

psrc = ;

pdest = ;

lines = src_height;

bytes_from_boundary = & 3;

if else

// ...

Two new functions were added, direct_blit_4u and direct_blit_u4r:

void

void

In order to test this out in the draw_map() function, I decided that for now it would be simpler to revert back to

just calling the normal surface_blit_xxxx functions. That way I don't need to clutter up that code even more then

it already is with calculations to determine what direct_blit_xxxx function needs to be called based on the x

coordinate. It'll run a little bit slower this way, but it doesn't matter, I just want to see if it's faster or not.

And as expected, it ended up being slower! By about 20 FPS in the fast scenario and 10/11 FPS in the slow scenario. I

would guess that this would get better if I was doing larger blits, but probably with the tile size I am using the cost

of an extra rep movsb is just not worth it.

Ultimately what I learned most from this experience is that I need to do more reading up on the subject, heh. I would not be surprised at all if I was missing something obvious here.

On the whole, this entire optimization exercise was useful and even though trying to address memory alignment didn't produce any results, I was able to improve overall performance. It would be interesting to compare results on a 386 at some point too, but that will have to wait.